The Spectrum 2 design for the Adobe Acrobat home page on the web provides a flexible and adaptable illustration system across icons, spot illustrations, and banners. It also takes a modular approach to grouping parts of the interface and brings a sense of depth through layered colors and subtle shadows. The first version of Spectrum was minimal, with a gray color palette designed to recede. Spectrum 2 reimagines icons, typography, and more to make the Adobe experience bolder and more approachable. One thing that hasn’t improved since the previous generation of Firefly AI is the resolution of the images it creates. Whether you’re generating images from scratch or generating new backgrounds, the output tops out at around 1,500 x 1,500 pixels, which could make it difficult to use generated images at full-page size in a magazine, for example.
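To put the 1,500 x 1,500 pixel ceiling in print terms, here is a rough back-of-the-envelope sketch. It assumes a 300 ppi print target (a common rule of thumb, not a figure from Adobe) and a US Letter page of 8.5 x 11 inches as a stand-in for a magazine page; the function names are hypothetical helpers for illustration only.

```python
def print_size_inches(width_px, height_px, ppi=300):
    """Physical size an image covers when printed at the given pixels per inch."""
    return (width_px / ppi, height_px / ppi)


def required_pixels(width_in, height_in, ppi=300):
    """Pixel dimensions needed to fill a page of the given size at the given ppi."""
    return (round(width_in * ppi), round(height_in * ppi))


# Assumption: 300 ppi print target and a US Letter page (8.5 x 11 in).
covered = print_size_inches(1500, 1500)
needed = required_pixels(8.5, 11)

print(f"1500 x 1500 px covers {covered[0]} x {covered[1]} in at 300 ppi")
print(f"A full 8.5 x 11 in page needs {needed[0]} x {needed[1]} px at 300 ppi")
```

Under those assumptions, a generated image covers roughly a 5-inch square, well short of the 2,550 x 3,300 pixels a full page would need without upscaling.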

Product

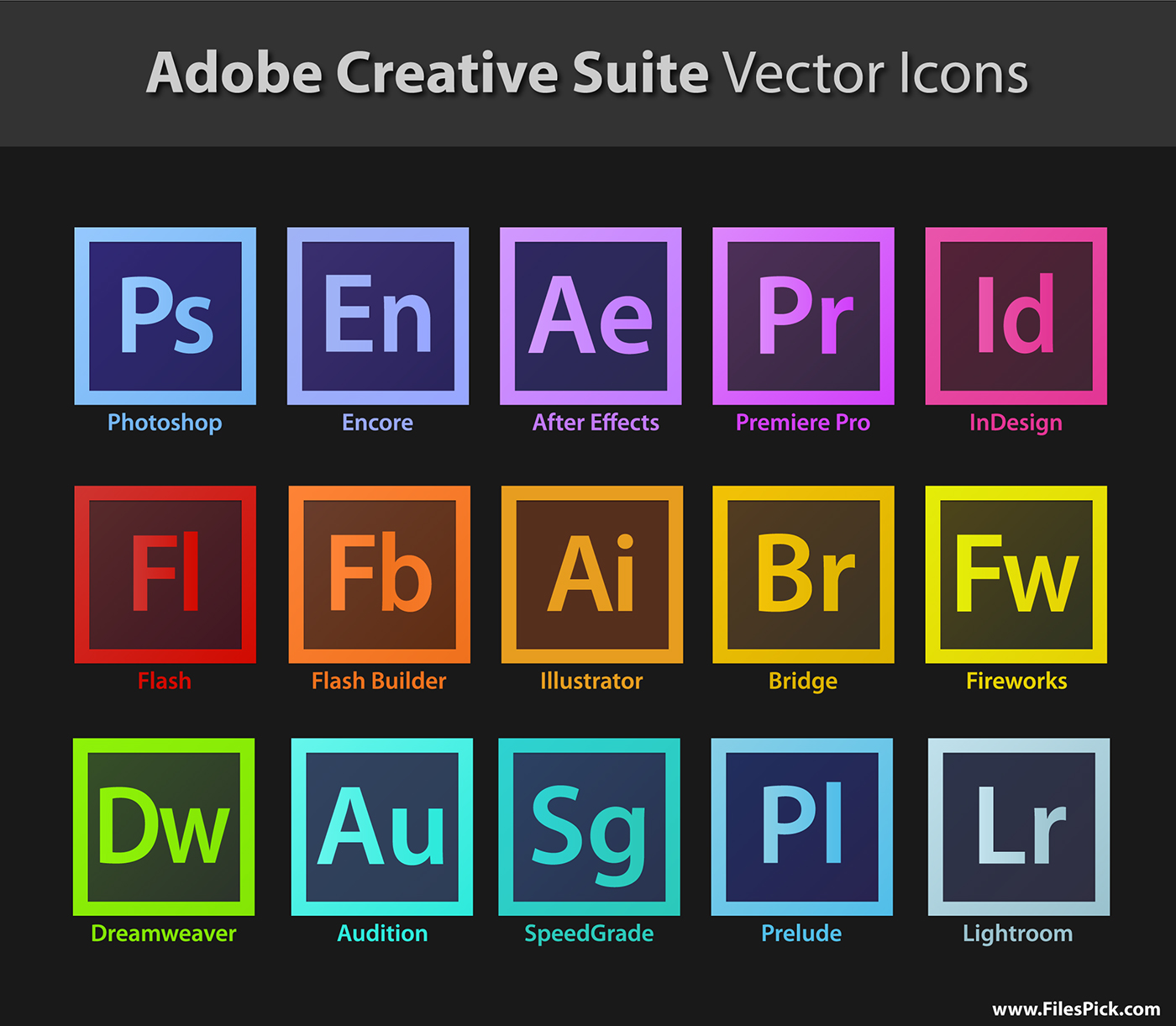

Under the hood it was still Spectrum, but multiple small changes made a big difference. Meanwhile, a small team at Adobe had started to think about the future of our products. What if we could create some sort of language that could unify our product portfolio? That portfolio had just gone through some fundamental changes, the most significant of which was the shift from Creative Suite to Creative Cloud. Mobile devices were becoming powerful enough to support our products and building our tools on the web finally felt realistic. It took years of hard work, collaboration, and perseverance by thousands of people, but Spectrum eventually made its way across our product line.

Our disciplines include:

Those building blocks are used by designers and engineers to craft consistent experiences at scale. They bridge the gap between pictures and implementations, create a common language between disciplines, and provide a pathway for synchronized future updates across multiple surfaces and frameworks. The brand design team developed a new toolkit of geometric shapes that can be combined in infinite ways to create illustrations, banners, and other assets. Aligning Spectrum 2’s color system with brand colors makes it easier to harmonize interfaces across products.

How Adobe Design is shaping the Firefly generative AI experience

All three have been vastly improved, along with the overall quality of generated images. Greenfield said there are three ways the company could improve the resolution of generated images. Arguably the standout new AI feature in Photoshop is the ability to generate images from scratch using text prompts. Previously, you could add elements to existing images, but now Adobe is allowing customers to start with a blank canvas and simply type a text prompt describing the image they want the AI to generate.

Exploring New Generative AI Features in Adobe Creative Cloud - Chapman University (posted Wed, 24 Apr 2024 00:54:39 GMT)

Automatic background removal has been a feature of Photoshop for some time, but now customers can generate AI replacements. For example, you may have a photo of a dog lying on grass, then select the option to remove the background and generate a beach setting in its place. Adobe is also adding generative AI features to other apps in its portfolio, including Lightroom and the publishing package InDesign. To try out Text to Image, update InDesign now, or experiment with it, along with all of Firefly’s capabilities, on firefly.adobe.com. In this article, we’ll explore how you can use Text to Image to boost your productivity and also take a look at a few other new features that will enhance your InDesign workflows. The first updates will roll out across Adobe web products in early 2024.

Andrew has been integral to the growing immersive entertainment space, both as a creator and a thinker, speaking at conferences around the world. His immersive content credits span the gamut of live-action, CGI, and real-time experiences for clients such as Intel, Adobe, USA Today, Google, GE, Disney, USA Network, Visa, Las Vegas, and many others. He has also directed commercials for Disney, LG, VIZIO, Google, Target, Barco, and M&M’s, and has done several traditional and VR projects featuring his own narrative and creative development. Adobe launched a limited beta for Firefly this week that showcases how creators of all experience and skill levels can generate high-quality images and text effects. Learn more about Firefly and sign up to participate in the beta at firefly.adobe.com.

Experience Cloud

Spectrum 2 will supply specific variants for each platform, making Adobe tools feel “at home” on the device and platform where you want to use them. When Spectrum was first designed, mobile platforms were evolving rapidly and the user experience varied wildly from app to app and between operating systems, so we initially leaned into making Spectrum as consistent as we could across platforms. Today, every platform has strong conventions that we want to follow more closely. Design systems provide the fundamental vision upon which those very principles, components, and patterns are built.

As a centralized design organization we help shape products across Adobe.

The beta, which people can use on a standalone website, will allow Adobe teams to learn from user feedback. Firefly-powered features will eventually be integrated into Adobe tools across Creative Cloud, Document Cloud, Experience Cloud, and Adobe Express. The biggest difference between Spectrum and Spectrum 2 is, well, all the little things. We’ve made hundreds of adjustments to the system, and combined they’ll create a big and very noticeable shift for the people who use our tools.

Experience Design

Accessibility is an important topic for anyone working in digital and print publishing. InDesign cloud documents can be accessed online or offline directly from within the app, on any device where InDesign is installed. In addition to Text to Image, we’re also introducing a few other innovative and much-requested features that will make your experience of using InDesign even better. In InDesign’s Text to Image panel, type a simple text prompt or select one of the sample prompts to generate an image in seconds. To use this new generative AI feature directly inside InDesign, you can use either the Contextual Task Bar or the Text to Image panel.

Adobe’s Brand Design team created an updated illustration system for Spectrum 2. Spectrum 2 will follow each platform’s conventions, from mobile (left) to mixed reality (right). We fuel our creativity with process, solve problems that seem insurmountable, and design to remove complexity. The third method would be to generate images piece by piece instead of as one complete image. “We’re looking at all three of those [methods],” Greenfield claimed.

Spectrum 2 will accommodate a wider range of personal preferences and needs, for example, by offering customizable density and contrast options (shown above), making Adobe products more accessible and inclusive. Spectrum 2 needed to meet a high bar for accessibility so the widest possible group of people can use Adobe tools. The World Health Organization estimates that 2.2 billion people around the world experience some form of visual impairment. For Spectrum 2, our definitive starting point for making our web tools more inclusive and accessible is the Web Content Accessibility Guidelines (WCAG) — which Adobe also contributes to.
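As a concrete illustration of what a WCAG-based check involves, the sketch below computes the contrast ratio between two sRGB colors using the relative luminance formula from the WCAG 2.x definitions. It is a minimal sketch, not Adobe’s implementation: the function names are hypothetical, and the 4.5:1 and 3:1 thresholds are the WCAG Level AA minimums for normal and large text, not Spectrum-specific values.

```python
def _linearize(channel_8bit):
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255
    # 0.04045 is the threshold in current WCAG text; older versions list 0.03928.
    # The two give identical results for 8-bit channel values.
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) tuple with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1.0 (identical colors) up to 21.0 (black on white)."""
    l1, l2 = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa(foreground, background, large_text=False):
    """True if the pair meets the WCAG Level AA minimum contrast for text."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold


# Illustrative colors only; these are not Spectrum 2 tokens.
black, white = (0, 0, 0), (255, 255, 255)
print(round(contrast_ratio(black, white), 2))
print(meets_aa(black, white))
```

A check like this is one way a design system could validate color pairs automatically, and adjustable contrast settings amount to choosing pairs that clear a higher threshold.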

The Spectrum 2 design for the Creative Cloud “Your files” page has a clearer visual hierarchy and a lighter, brighter, more expressive and approachable appearance. To support the vision of Spectrum 2, our team made changes across multiple underlying components in our design system. This includes rethinking icons to be cleaner, friendlier, and more adaptable, playful, and familiar. When Firefly was first released, faces were often a disfigured mush, hands looked deformed, and text was impossible to render.
